* This deep learning session is a summary of Sung Kim's lectures (YouTube).

** Sung Kim's lectures and materials draw on the sources listed below.

- Andrew Ng's ML class

- Convolutional Neural Networks for Visual Recognition

- TensorFlow

CONTENTS

- Logistic(regression) classification

- Logistic(regression) classification cost function & gradient descent

- Softmax classification: Multinomial classification

- Learning rate, data preprocessing, overfitting

05-1. Logistic (regression) classification

RECAP

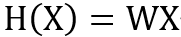

Setting up the hypothesis

: W (weight), X (variable)

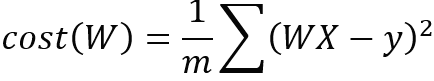

Computing the cost

: Measure the difference between the predicted values and the actual values.

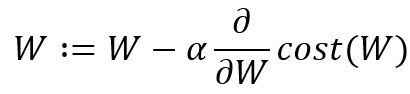

Applying gradient descent

: Find the slope (W) that minimizes the cost.

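Binary Classification

: In binary classification, y takes only two values, which can be encoded as 0 and 1. Examples include Yes/No and Pass/Non-Pass. Plain linear regression produces outputs over a wide numeric range, so to classify into two classes the output of the function needs to be confined to 0~1. When the labels are 0 and 1, a sigmoid function is applied so that the predicted y falls between 0 and 1.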

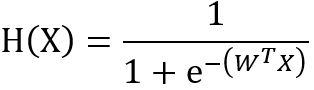

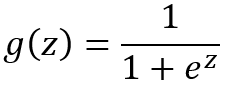

Sigmoid Function

: A function whose output always lies between 0 and 1. Once y is computed, a value below 0.5 is treated as 0 and a value of 0.5 or above is treated as 1.
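
The thresholding rule above can be sketched in a few lines of Python. This is only an illustration: the function names `sigmoid` and `predict_label` and the sample scores are assumptions, and `z` stands for the linear score W*x + b from the recap.

```python
import math

def sigmoid(z):
    """Map any real number z to a value between 0 and 1."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    # For negative z, use an equivalent form that avoids overflow in exp()
    e = math.exp(z)
    return e / (1.0 + e)

def predict_label(z, threshold=0.5):
    """Return 1 if sigmoid(z) is at or above the threshold, otherwise 0."""
    return 1 if sigmoid(z) >= threshold else 0

# Hypothetical linear scores: negative, zero, positive
for z in (-4.0, 0.0, 4.0):
    print(z, round(sigmoid(z), 3), predict_label(z))
```

A score of exactly 0 gives a sigmoid value of 0.5, which the rule above assigns to class 1.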

05-2. Logistic (regression) classification: cost function & gradient descent

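The cost problem in logistic regression (classification)

: In linear regression the cost is a quadratic function, so its minimum is easy to find. In logistic regression, however, the sigmoid inside the hypothesis turns the same cost into a bumpy curve. Applying gradient descent to such a curve can, depending on the settings (for example, the starting point), end at a local minimum instead of the global minimum.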

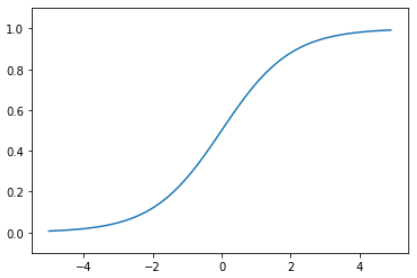

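The cost function in logistic regression (classification)

: The cost uses log, the inverse of the exponential e that appears in the sigmoid. When y=1 and H(x) predicts 1, the cost is 0, its minimum. When y=1 but H(x) predicts 0, the cost goes to infinity, so the model is heavily penalized and keeps learning. Defined this way, the cost again has a single bowl shape like the quadratic, so gradient descent avoids the earlier problem and can find the minimum.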
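
As a rough illustration of this behavior, the per-example cost can be written as -log(H(x)) when y=1 and -log(1 - H(x)) when y=0. The y=0 branch is not spelled out above and is included here as the standard counterpart; the function name `logistic_cost` and the sample predictions are assumptions.

```python
import math

def logistic_cost(h, y):
    """Cost for one example, where h = H(x) is strictly between 0 and 1."""
    if y == 1:
        return -math.log(h)        # near 0 when h is close to 1, grows as h -> 0
    return -math.log(1.0 - h)      # near 0 when h is close to 0, grows as h -> 1

# y = 1: a confident correct prediction is cheap, a confident wrong one is expensive
good = logistic_cost(0.99, 1)
bad = logistic_cost(0.01, 1)
print(round(good, 4), round(bad, 4))
```

Averaging this cost over all training examples gives the quantity that gradient descent minimizes.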

06-1. Softmax classification: Multinomial classification

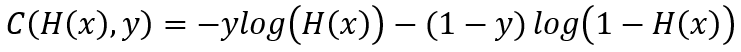

Multinomial classification, multinomial logistic regression

: A classification/prediction model used when y has three or more categories.

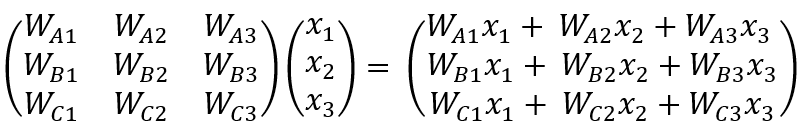

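Equations of the multinomial model

: For example, if Y can take the values A, B, and C, three equations are needed to classify or predict, one per class. These three equations can be combined into a single expression by stacking their weights as the rows of one MATRIX.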
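
A minimal sketch of stacking the three equations into one matrix product follows. The weight values, the input vector, and the helper name `matvec` are made up for illustration, and the bias terms are left out to keep it short.

```python
def matvec(W, x):
    """Multiply matrix W (a list of rows) by vector x, giving one score per row."""
    return [sum(w_ij * x_j for w_ij, x_j in zip(row, x)) for row in W]

# Hypothetical weights: one row per class (A, B, C), one column per input variable
W = [
    [1.0, 2.0, 0.0],   # equation for class A
    [0.0, 1.0, 1.0],   # equation for class B
    [2.0, 0.0, 1.0],   # equation for class C
]
x = [1.0, 0.0, 2.0]

scores = matvec(W, x)
print(scores)  # [1.0, 2.0, 4.0]
```

Each entry of `scores` is the output of one of the three equations, computed in a single matrix operation.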

06-2. Softmax classification: softmax and cost function

When is sigmoid used in the multinomial case?

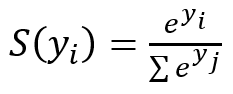

: In multinomial classification/regression, sigmoid is not used; softmax is used instead.

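Properties of the softmax function

: Softmax maps each predicted y to a value between 0 and 1 and makes all the predicted y values sum to 1. One-hot encoding then sets the largest of these values to 1 and the rest to 0, which gives the classified/predicted y.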
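
The two steps, softmax followed by one-hot encoding of the largest value, might look like this in plain Python. The function names and the example scores are assumptions, and subtracting the maximum score is a common numerical-stability trick rather than something stated above.

```python
import math

def softmax(scores):
    """Turn raw scores into values between 0 and 1 that sum to 1."""
    m = max(scores)                          # shift by the max so exp() cannot overflow
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def one_hot_argmax(probs):
    """Set the largest entry to 1 and every other entry to 0."""
    best = probs.index(max(probs))
    return [1 if i == best else 0 for i in range(len(probs))]

probs = softmax([2.0, 1.0, 0.1])             # hypothetical scores for classes A, B, C
print([round(p, 2) for p in probs])
print(one_hot_argmax(probs))                 # [1, 0, 0]
```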

07-1. Learning rate, data preprocessing, overfitting

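What is the learning rate?

: The learning rate is the step size that gradient descent uses while searching for the minimum. If the learning rate is too large, the steps can jump over the minimum and never reach it, a problem called overshooting. If the learning rate is too small, training takes a long time or can stall at a local minimum. When choosing a learning rate, it is therefore important to watch the cost function and look for a reasonable value.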
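
The effect of the step size can be seen on a toy problem. The sketch below assumes the cost is simply cost(w) = w**2, whose gradient is 2*w; the three learning rates and the function name are chosen only for illustration.

```python
def gradient_descent(lr, steps=20, w=5.0):
    """Minimize cost(w) = w**2 (gradient 2*w), starting from w."""
    for _ in range(steps):
        w = w - lr * 2.0 * w
    return w

# Too small, reasonable, and too large learning rates
for lr in (0.001, 0.1, 1.1):
    w = gradient_descent(lr)
    print(f"lr={lr}: w={w:.4f}, cost={w * w:.4f}")
```

With the smallest rate, w has barely moved after 20 steps; with the largest, each step overshoots the minimum at 0 and w moves further away.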

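Why is preprocessing needed?

: Preprocessing is needed for gradient descent to work effectively. For example, if x1 ranges from 0 to 10 while x2 ranges from -10000 to 10000, the cost function is drawn in a distorted shape, and even a slightly wrong step is likely to take gradient descent off the path to the minimum. The data therefore needs to be preprocessed, for example by normalization, so that it falls within a common range.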
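
One common way to do this is standardization, which rescales each variable to zero mean and unit standard deviation. The sketch below is an assumption about which normalization to use, the sample values are invented to match the ranges mentioned above, and it presumes the values are not all identical (the standard deviation would otherwise be 0).

```python
def standardize(values):
    """Rescale values to zero mean and unit (population) standard deviation."""
    n = len(values)
    mean = sum(values) / n
    std = (sum((v - mean) ** 2 for v in values) / n) ** 0.5
    return [(v - mean) / std for v in values]

x1 = [0.0, 2.0, 5.0, 10.0]                 # small range
x2 = [-10000.0, -500.0, 3000.0, 10000.0]   # much larger range

x1_scaled = standardize(x1)
x2_scaled = standardize(x2)
print([round(v, 2) for v in x1_scaled])
print([round(v, 2) for v in x2_scaled])
```

After scaling, both variables lie on a comparable scale, so neither dominates the shape of the cost function.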

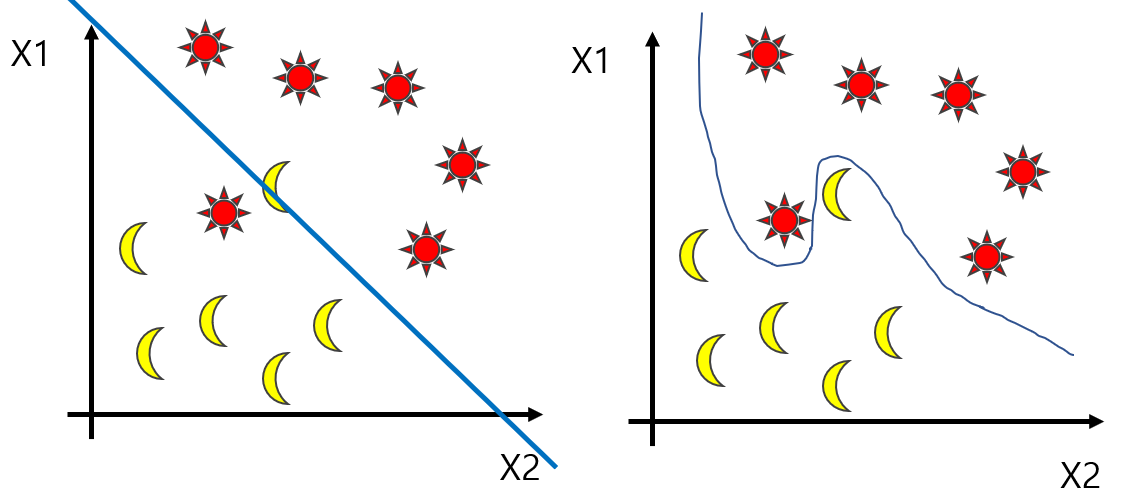

What is overfitting?

: Overfitting means the model has been fit too closely to the given dataset: it predicts/classifies the training data well but performs poorly on new data.

Ways to address overfitting

- Increase the amount of training data

- Reduce the number of variables (features)

- Regularization

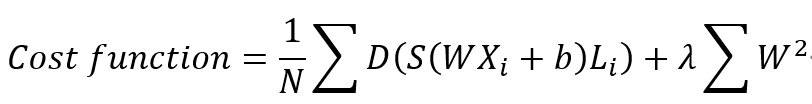

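What is regularization?

: The larger the W (weight) values become, the more sharply the fitted curve bends. Regularization keeps the weight values small in order to flatten these bends so that gradient descent works well. It does so by adding a penalty on the weights to the cost, and lambda is adjusted to control how strong that penalty is while the minimum is being sought.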
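
A minimal sketch of how lambda enters the cost, assuming the common L2 form in which lambda times the sum of squared weights is added to the data cost. The function name, the weight values, and the data cost of 1.0 are hypothetical.

```python
def regularized_cost(data_cost, weights, lam):
    """Add an L2 penalty, lam * sum(w**2), to the cost measured on the data."""
    return data_cost + lam * sum(w * w for w in weights)

small_w = [0.5, -0.5]
large_w = [5.0, -5.0]

# Same data cost, but large weights are penalized much more
print(regularized_cost(1.0, small_w, lam=0.1))
print(regularized_cost(1.0, large_w, lam=0.1))
```

Setting `lam` to 0 turns regularization off, while a large `lam` pushes the weights toward small values at the expense of fitting the training data.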

07-2. Learning and test data sets

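How do we evaluate a model's performance?

: Data that was already used for training is not used to evaluate the model. The available data is split into train data and test data beforehand. Another approach is to split it three ways, into train data, validation data, and test data; the validation data is used to tune values such as the learning rate used in gradient descent or the lambda used in regularization.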
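
A three-way split can be sketched as follows. The 70/15/15 proportions, the fixed seed, and the function name `split_dataset` are assumptions made for illustration, not values from the lecture.

```python
import random

def split_dataset(rows, train_frac=0.7, val_frac=0.15, seed=0):
    """Shuffle rows and split them into train, validation, and test lists."""
    rows = list(rows)
    random.Random(seed).shuffle(rows)      # fixed seed so the split is repeatable
    n_train = round(len(rows) * train_frac)
    n_val = round(len(rows) * val_frac)
    train = rows[:n_train]
    val = rows[n_train:n_train + n_val]
    test = rows[n_train + n_val:]          # whatever remains is held out for testing
    return train, val, test

train, val, test = split_dataset(range(100))
print(len(train), len(val), len(test))
```

The model is fit on `train`, settings such as the learning rate and lambda are tuned on `val`, and `test` is used only once at the end to report performance.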

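What is online learning?

: Online learning trains the model on the data in parts when there is a lot of it. For example, with 1,000,000 examples, the model is built up by training on 100,000 at a time. Because the existing model keeps being updated rather than retrained from scratch, online learning has the advantage, though not always, of predicting/classifying new data well.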
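
The idea of carrying the model from one batch of data to the next can be sketched on a toy problem. Everything here is an assumption for illustration: the model is a one-parameter line y = w * x, the data follows y = 3x, and the chunks hold 5 examples each instead of 100,000.

```python
def train_on_chunk(w, chunk, lr=0.01, epochs=50):
    """Continue gradient descent on y = w * x from the current w, using one chunk."""
    for _ in range(epochs):
        # Gradient of the mean squared error over this chunk
        grad = sum(2.0 * (w * x - y) * x for x, y in chunk) / len(chunk)
        w -= lr * grad
    return w

# Toy data following y = 3x, delivered in two chunks of five examples
data = [(float(x), 3.0 * x) for x in range(1, 11)]
chunks = [data[i:i + 5] for i in range(0, len(data), 5)]

w = 0.0
for chunk in chunks:
    w = train_on_chunk(w, chunk)   # keep the learned w and refine it on new data
    print(round(w, 3))
```

The point of the sketch is that `w` is never reset between chunks, so each new chunk refines what was already learned.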
